Hi! I'm Roman Bachmann, a Machine Learning Research Scientist at Apple. My research focuses on building scalable any-to-any multimodal foundation models for world modelling and visual reasoning. My goal is to build adaptable world priors that enable quick understanding of the environment and allow for global, out-of-sight reasoning.

Previously, I was a PhD student at EPFL in VILAB, advised by Amir Zamir. I received my M.Sc. in Data Science from EPFL, where I also completed my B.Sc. in Computer Science. During my studies, I interned as a research scientist at Apple and RIKEN AIP.

2025

2023

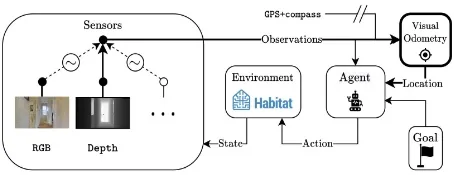

Modality-invariant Visual Odometry for Embodied Vision →

2023 Conference on Computer Vision and Pattern Recognition

2022

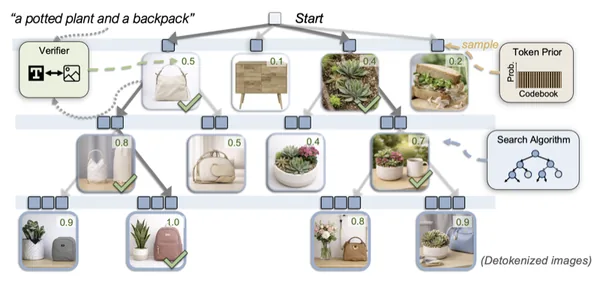

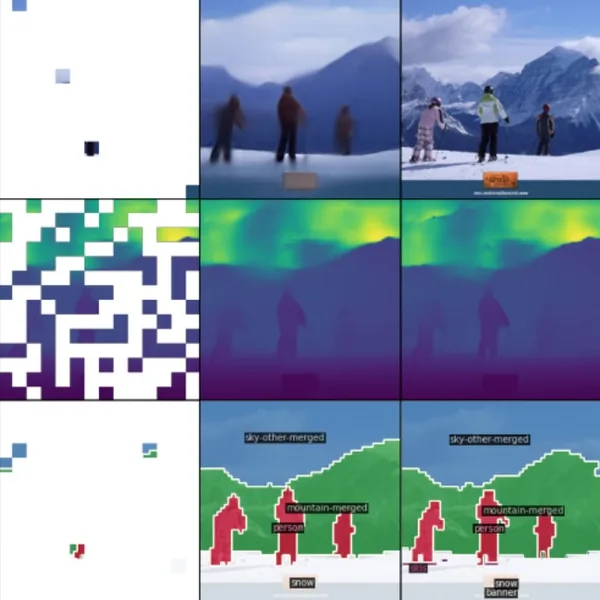

MultiMAE: Multi-modal Multi-task Masked Autoencoders →

2022 European Conference on Computer Vision

2021

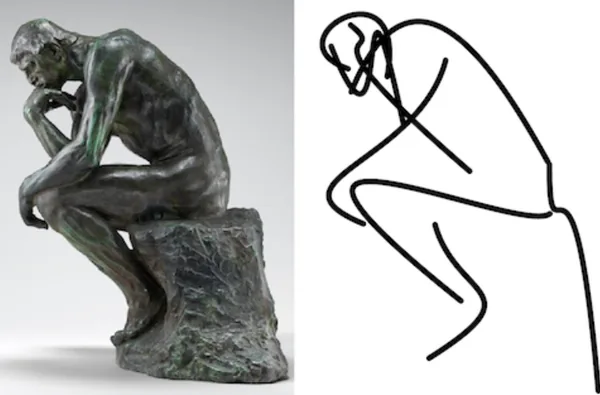

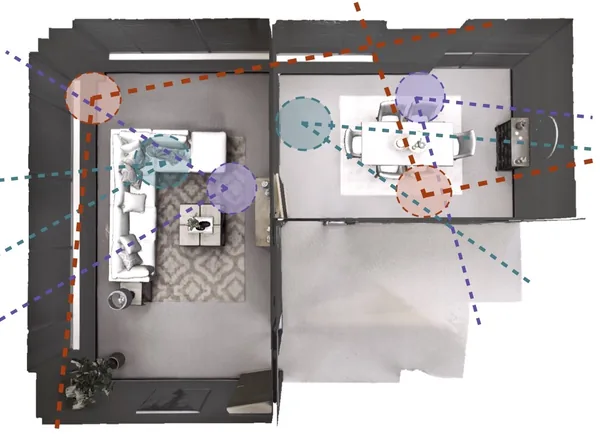

Omnidata: A Scalable Pipeline for Making Multi-Task Mid-Level Vision Datasets from 3D Scans →

2021 International Conference on Computer Vision

2020

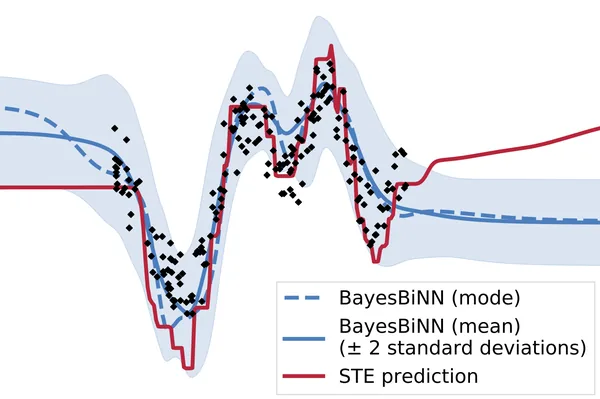

Training Binary Neural Networks using the Bayesian Learning Rule →

2020 International Conference on Machine Learning

2019

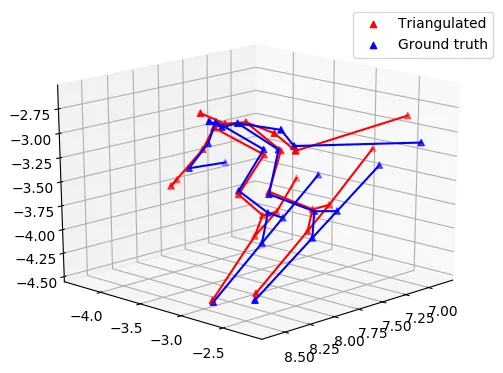

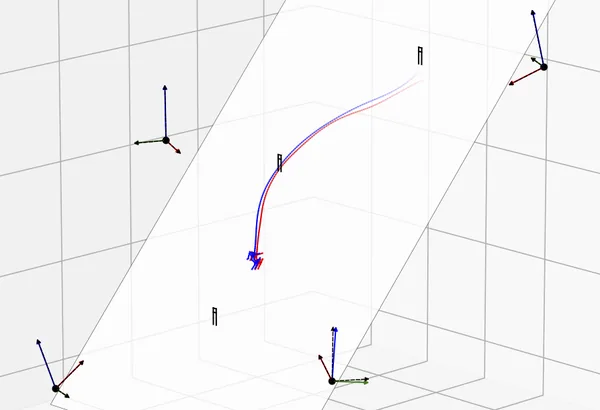

Motion Capture from Pan-Tilt Cameras with Unknown Orientation →

2019 International Conference on 3D Vision

★ Selected for oral presentation

Global Motion Estimation from Pan-Tilt Cameras →

2019 Central European Seminar on Computer Graphics (non-peer-reviewed)

★ Voted best presentation and third best paper

Automatic 3D motion capture in alpine skiing using deep learning and computer vision →

8th International Congress on Science and Skiing (non-peer-reviewed)

★ Won first place in the Young Investigator Award competition