ViPer: Visual Personalization of Generative Models via Individual Preference Learning

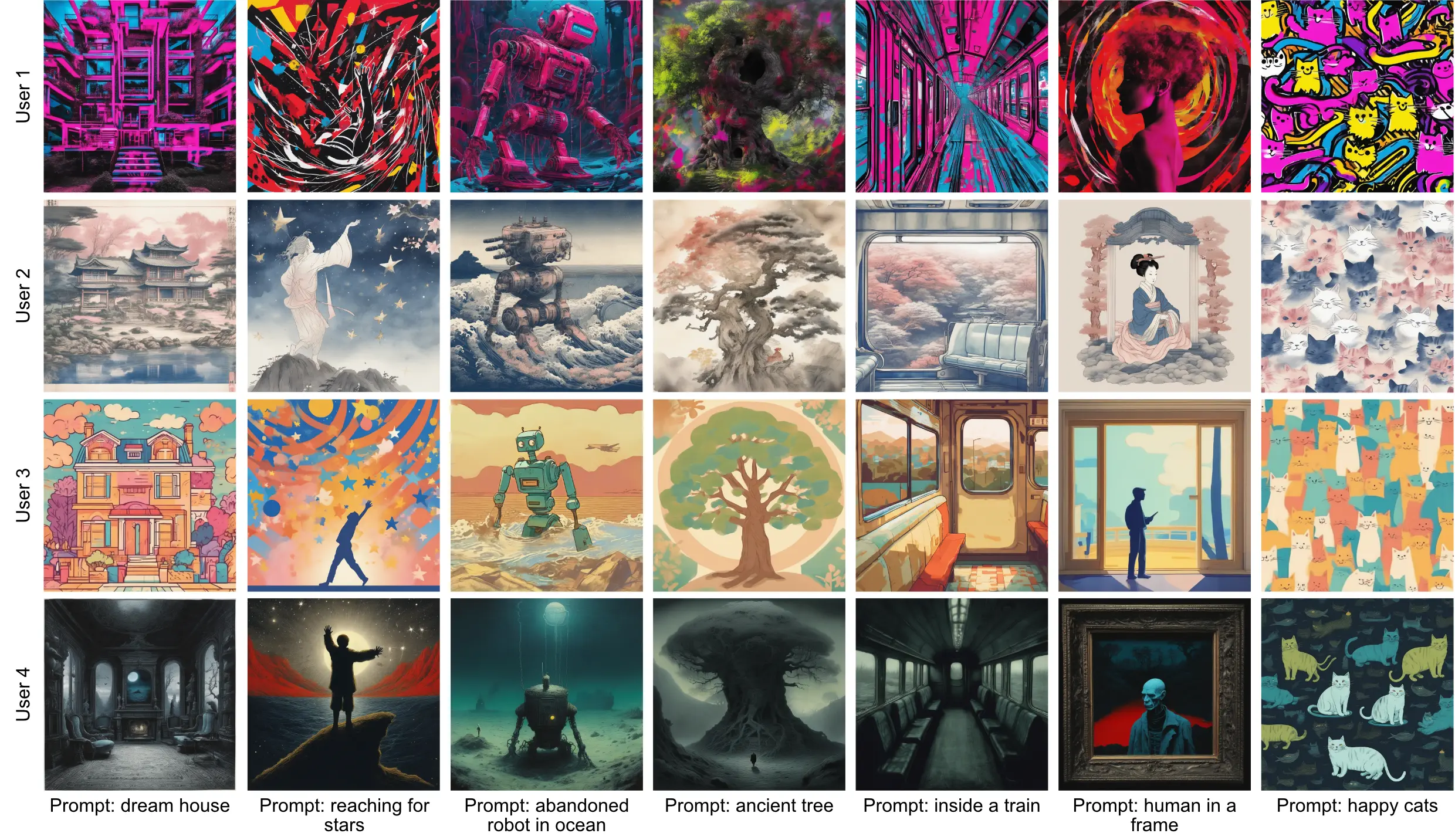

Different users find different images generated for the same prompt desirable. This gives rise to personalized image generation which involves creating images aligned with an individual’s visual preference. Current generative models are, however, tuned to produce outputs that appeal to a broad audience are unpersonalized. Using them to generate images aligned with individual users relies on iterative manual prompt engineering by the user which is inefficient and undesirable.

We propose to personalize the image generation process by, first, capturing the generic prefernces of the user in a one-time process by inviting them to comment on a small selection of images, explaining why they like or dislike each. Based on these comments, we infer a user’s structured liked and disliked visual attributes, i.e., their visual preference, using a large vision-language model. These attributes are used to guide a text-to-image model toward producing images that are tuned towards the individual user’s visual preference. Through a series of user studies and large language model guided evaluations, we demonstrate that the proposed method results in generations that are well aligned with individual users’ visual preferences.

Please see our GitHub repository for code and pre-trained models, our HuggingFace demo to play around with the model, and our website for additional visualizations!